
Download

VR Programs Source (F#, MS VS 2015)

Survey Analyzer Source (F#, MS VS 2015)

VRVis on GitHub

Aardvark.Rendering

Description

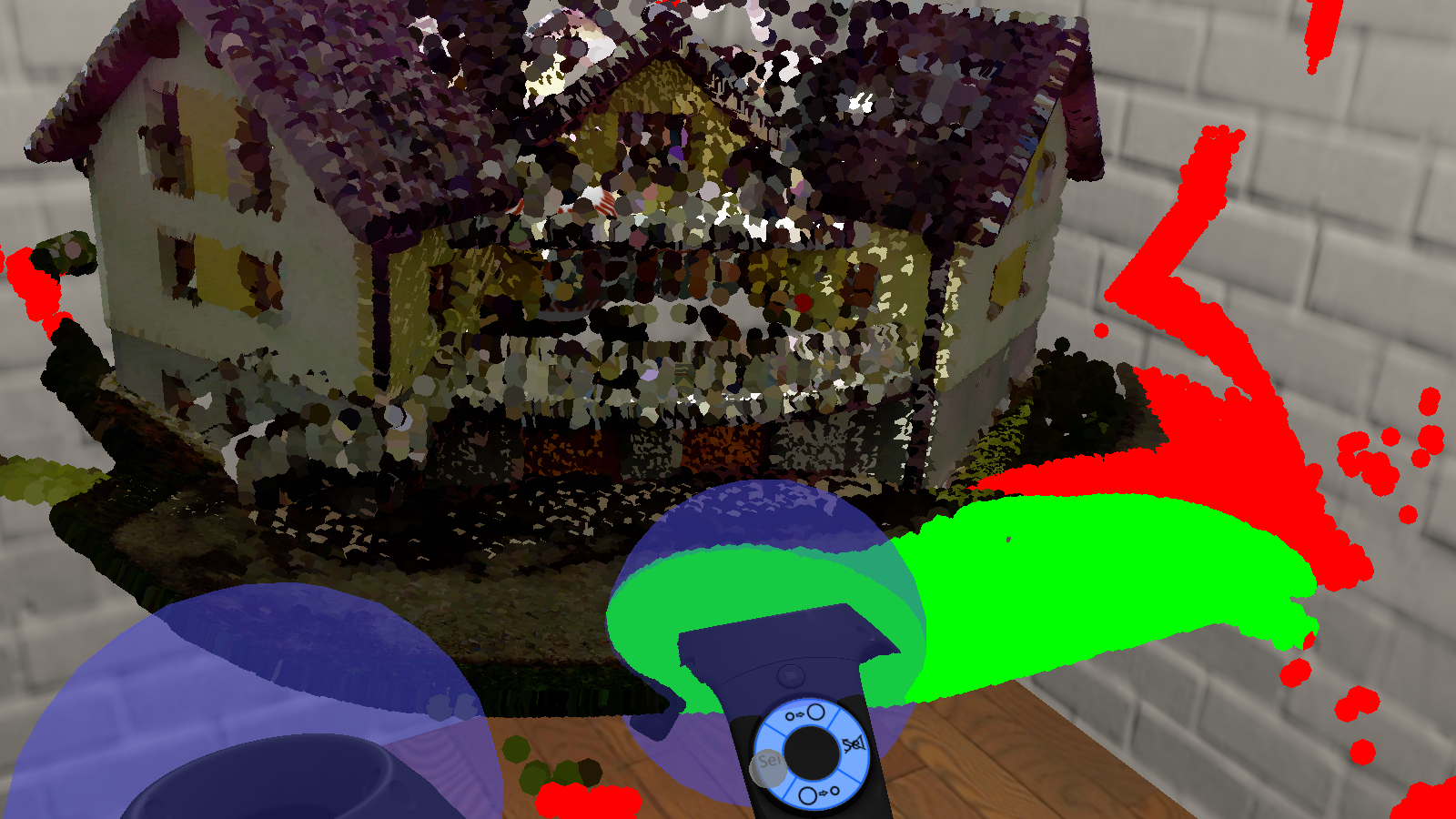

Virtual reality (VR) is becoming a mainstream medium. Current systems such as the HTC Vive offer accurate tracking of the head-mounted display (HMD) and controllers, which allows highly immersive interaction with the virtual environment. Feedback can enhance these interactions further. For example, a controller can vibrate when it is close to a grabbable ball.
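The proximity feedback described above can be sketched as a distance test between the tracked controller and the ball. This is a minimal Python sketch, not the project's F# code: the `Ball` type, the `feedback_strength` function, the 5 cm `reach` tolerance, and the linear falloff are all hypothetical choices. A real application would pass the returned strength to its VR runtime's haptics or highlighting code.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]  # position in metres (hypothetical convention)


@dataclass
class Ball:
    center: Vec3
    radius: float


def feedback_strength(controller: Vec3, ball: Ball, reach: float = 0.05) -> float:
    """Return a feedback strength in [0, 1] for a controller near a grabbable ball.

    `reach` is a hypothetical grab tolerance (metres) beyond the ball's surface.
    The result is 0 outside that tolerance and rises linearly to 1 at the surface.
    """
    # Distance from the controller to the ball's surface (negative if inside).
    gap = math.dist(controller, ball.center) - ball.radius
    if gap <= 0.0:
        return 1.0  # controller touches or is inside the ball: full strength
    if gap >= reach:
        return 0.0  # ball is out of reach: no feedback
    return 1.0 - gap / reach  # linear falloff as the controller approaches
```

The same value can drive either feedback type from the study: a vibration amplitude for haptic feedback or a highlight intensity for optical feedback.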

Because such interactions have not been researched exhaustively, we conducted a user study. Specifically, it examines:

- grabbing and throwing with controllers in a simple basketball game.

- the influence of haptic and optical feedback on performance, presence, task load, and usability.

- the advantages of VR over desktop for point-cloud editing.

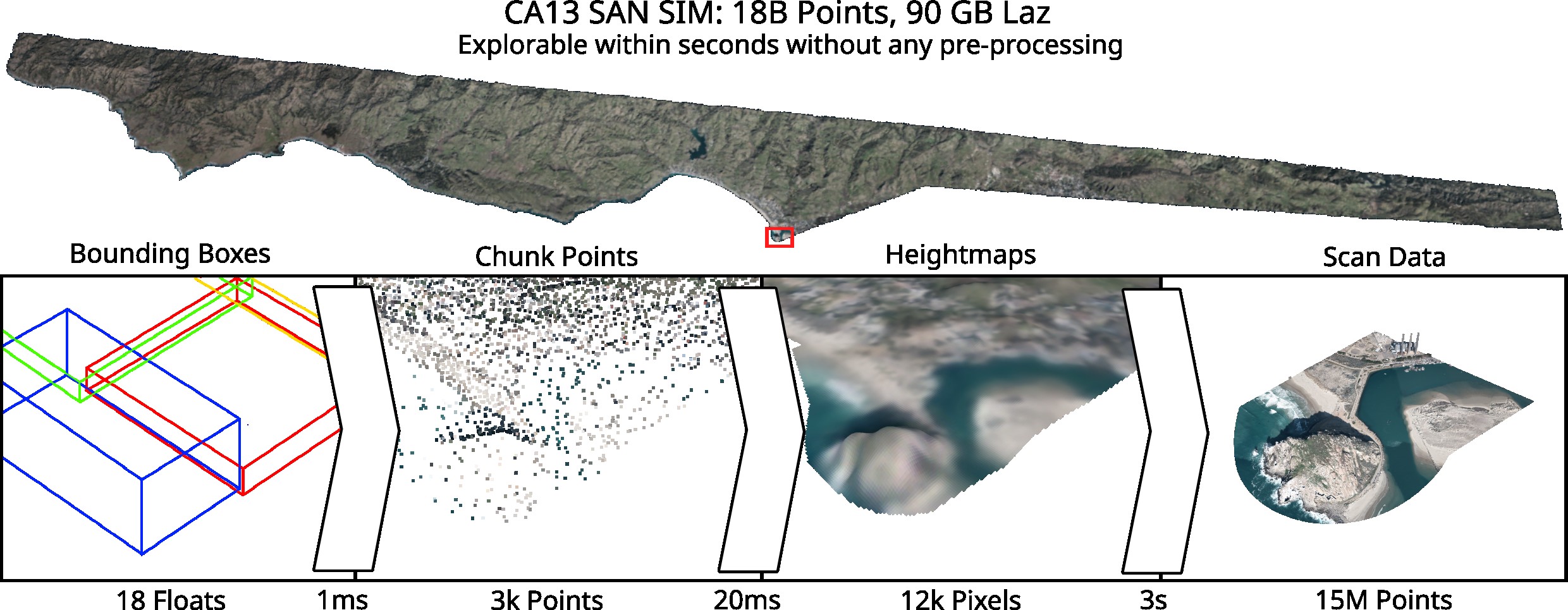

Several new techniques emerged from the point-cloud editor for VR. The bi-manual pinch gesture, which extends the handlebar metaphor, is a novel viewing method for translating, rotating, and scaling the point cloud. Our new rendering technique uses the geometry shader to draw sparse point clouds quickly. The selection volumes attached to the controllers are our new technique for efficiently selecting points in point clouds, and the resulting selection is visualized in real time.
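The viewing and selection techniques above can be illustrated with a short geometric sketch. Assuming the handlebar metaphor is read as a similarity transform defined by the two controller positions, the change in distance between the hands gives the scale, the rotation that turns the old hand-to-hand direction into the new one gives the orientation, and the midpoint of the hands gives the translation. This is a hypothetical Python/NumPy illustration, not the authors' F# implementation; the names `rotation_between`, `handlebar_transform`, and `select_in_sphere`, and the spherical shape of the selection volume, are assumptions.

```python
import numpy as np


def _skew(k):
    """Cross-product matrix K such that K @ x == np.cross(k, x)."""
    return np.array([[0.0, -k[2], k[1]],
                     [k[2], 0.0, -k[0]],
                     [-k[1], k[0], 0.0]])


def rotation_between(u, v):
    """Smallest rotation matrix turning direction u into direction v (Rodrigues form)."""
    u = u / np.linalg.norm(u)
    v = v / np.linalg.norm(v)
    c = float(np.dot(u, v))
    if c < -1.0 + 1e-9:
        # Opposite directions: rotate 180 degrees about any axis perpendicular to u.
        axis = np.cross(u, [1.0, 0.0, 0.0])
        if np.linalg.norm(axis) < 1e-6:
            axis = np.cross(u, [0.0, 1.0, 0.0])
        axis = axis / np.linalg.norm(axis)
        return 2.0 * np.outer(axis, axis) - np.eye(3)
    K = _skew(np.cross(u, v))
    return np.eye(3) + K + K @ K / (1.0 + c)


def handlebar_transform(left0, right0, left1, right1):
    """Return a function mapping points so that (left0, right0) lands on (left1, right1).

    Assumes the two hands are not at the same position at pinch start.
    """
    d0, d1 = right0 - left0, right1 - left1
    scale = np.linalg.norm(d1) / np.linalg.norm(d0)  # hands apart -> enlarge
    rot = rotation_between(d0, d1)                  # turn the "bar" direction
    mid0, mid1 = (left0 + right0) / 2.0, (left1 + right1) / 2.0

    def apply(points):
        # Scale and rotate about the old midpoint, then move to the new midpoint.
        return (points - mid0) @ (scale * rot).T + mid1

    return apply


def select_in_sphere(points, center, radius):
    """Boolean mask of the (N, 3) points inside a spherical volume at a controller."""
    return np.linalg.norm(points - center, axis=1) <= radius
```

In this sketch only the two hand positions are used, so twisting both hands around the axis between them produces no rotation. The boolean mask from `select_in_sphere` could be recomputed every frame to recolor the selected points.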

The results of the user study show that:

- grabbing with a controller button is intuitive, but throwing is not. Releasing a button is a poor metaphor for letting go of a grabbed virtual object in order to throw it.

- any feedback is better than none. Adding haptic feedback, optical feedback, or both to grabbing improves user performance and presence. However, only sub-scores such as accuracy and predictability improve significantly. Usability and task load are mostly unaffected by feedback.

- point-cloud editing is significantly better in VR, with the bi-manual pinch gesture and selection volumes, than on the desktop with the orbiting camera and lasso selection.

Credits

Textures, converted to compressed formats

- Painted Brick Wall

- Shiny Wood Parquet Floor

- Dutch Brown Brick

- Wooden Planks

- Tufted Leather

- Marble Polished White 1

- Basketball

- Softball, Beach Ball, Tennis Ball

Hoop model and textures

Point-cloud dataset by VRVis

Sounds, exported as WAV with Microsoft encoding